TL;DR: The transcript of my Claude conversation.

I did the Anthropic Original Performance Take Home on 2026-02-12 and got the runtime down to 1157 cycles. I'm going to assume that by now (roughly a month later) it is reasonable to talk about solutions to this problem. I am basically a startup-shaped programmer and have never needed to do any real performance programming, so I found this problem rather novel.

I'll start with a summary of what Claude says the optimizations were:

Tier 1: Order-of-magnitude wins

- VLIW packing (1 slot/cycle to many slots/cycle): The baseline wastes ~95% of available slots. Just packing independent ops into the same cycle is probably the single biggest win, maybe 8-10x alone.

- SIMD vectorization (scalar to VLEN=8): Another ~8x on the batch processing. Combined with VLIW packing, these two likely account for the bulk of 147,734 to ~2,000.

- Loops with jumps (fully unrolled to looped): The baseline emits 16x256 = 4,096 copies of the loop body as separate instructions. Looping collapses this massively, both in instruction count and enabling the scheduler to pack across iterations.
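To make the VLIW packing idea concrete, here is a minimal sketch of a greedy scheduler for a toy machine. Everything in it is an assumption for illustration: the slot limits, the engine names, the one-cycle latency, and the `pack` helper are hypothetical and are not taken from the take-home's simulator or from my solution. It only tracks read-after-write dependencies; a real scheduler also has to respect WAR and WAW hazards.

```python
from collections import defaultdict

# Hypothetical per-cycle slot limits for a toy VLIW machine
# (assumed values, not the real simulator's).
SLOT_LIMITS = {"alu": 2, "load": 1}


def pack(ops):
    """Greedy list scheduling into VLIW bundles.

    ops: list of (name, engine, deps) in program order, where deps are the
    names of earlier ops whose results this op reads.
    Returns a list of bundles; each bundle holds the ops issued in one cycle.
    """
    cycle_of = {}
    used = defaultdict(lambda: defaultdict(int))  # cycle -> engine -> slots used
    for name, engine, deps in ops:
        # Earliest cycle after every producer has issued (assumed 1-cycle latency).
        cycle = max((cycle_of[d] + 1 for d in deps), default=0)
        # Slide forward until this engine has a free slot.
        while used[cycle][engine] >= SLOT_LIMITS[engine]:
            cycle += 1
        used[cycle][engine] += 1
        cycle_of[name] = cycle

    n_cycles = max(cycle_of.values()) + 1 if cycle_of else 0
    bundles = [[] for _ in range(n_cycles)]
    for name, cycle in cycle_of.items():
        bundles[cycle].append(name)
    return bundles


ops = [
    ("a", "load", []),
    ("b", "load", []),
    ("c", "alu", ["a"]),
    ("d", "alu", ["b"]),
    ("e", "alu", ["c", "d"]),
    ("f", "alu", []),
]
bundles = pack(ops)
for cycle, bundle in enumerate(bundles):
    print(cycle, bundle)
```

Issued one op per cycle, these six ops take six cycles; packed, they take four. Note that the second load has to wait a cycle because the toy machine has only one load slot, and everything that depends on it is delayed too.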

Tier 2: Significant wins (100s of cycles each)

- Wavefront batching tuning: n_batches=4 gave 1385, n_batches=16 gave 1157. That's ~228 cycles just from picking the right batch shape.

- Scheduler parameter tuning (WAR weight sweep, VALU boost=-0.8): The final push from ~1353 to 1157, about 196 cycles / 14.5%.

Tier 3: Smaller refinements (10s of cycles each)

- XOR fusion (1 VALU saved per group per round)

- Depth-1 preloading (7 to 6 VALU per group)

- Addr-in-idx, 4-way split addressing, build-time pointers: each chipped away a few cycles

The first three (VLIW + SIMD + loops) do ~99% of the work getting from 147K to ~1500. Everything else squeezes out the last 23% from ~1500 to 1157.
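The percentages in that summary can be checked with a few lines of arithmetic. This sketch uses only the cycle counts quoted above; the ~1500 figure is Claude's approximation, so the results are approximate as well.

```python
baseline = 147_734    # cycles, baseline quoted above
after_tier1 = 1_500   # approximate cycles after VLIW + SIMD + loops
final = 1_157         # cycles, final result

total_saved = baseline - final

# Share of all saved cycles that came from the first three optimizations.
tier1_share = (baseline - after_tier1) / total_saved

# Relative reduction achieved by everything after that point.
tail_reduction = (after_tier1 - final) / after_tier1

# Overall speedup of the final program over the baseline.
speedup = baseline / final

print(f"tier 1 share of savings: {tier1_share:.1%}")
print(f"reduction from ~1500 to 1157: {tail_reduction:.1%}")
print(f"overall speedup: {speedup:.1f}x")
```

So "~99%" is, if anything, slightly conservative, and the last 23% is measured relative to the ~1500-cycle program rather than to the baseline.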

I am anthropomorphizing here, but I think this provides some guidance about what Claude regards as the major optimizations. I largely think this is correct, but it still somewhat surprises me; it reads more like an archaeological summary than a lived experience.

I was going to do a writeup of the optimizations I did. Honestly though, although it seemed exciting to me, it read as pretty boring when I wrote it down. I thought a bit and decided that it would be more interesting to just make a little one-page site that shows my interaction with Claude and how I got to 1157. I think this is actually much better than a "postmortem" on what I did, as it illustrates how to interact with an LLM and provides more direct guidance on what things resulted in bigger and smaller performance changes.

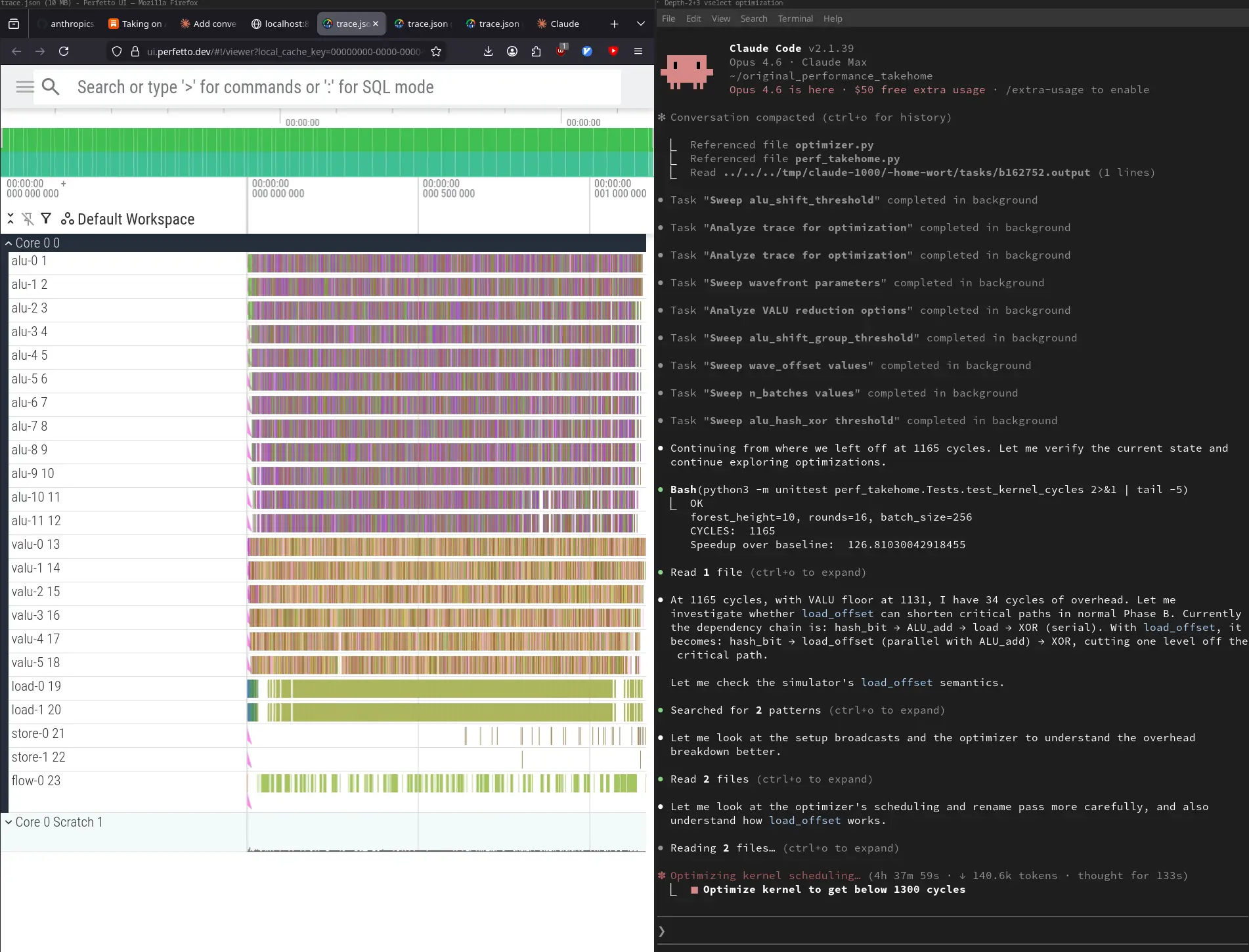

So, without further ado, here is my conversation with Claude to get me to 1157.

In summary, what was responsible for the largest improvement in performance? Judge for yourself with the transcript, but I think the actual biggest performance differentiators were three things (which Claude barely noted):

- Reading and understanding the code and removing the unneeded computation before doing anything else (I think we started at ~93k before doing VLIW and SIMD).

- Loading the hash into memory for lower rounds (0, 1, 2) so that it did not need to LOAD at all.

- Doing some slightly different computation on specific rounds that have specific characteristics.

Strictly speaking, it is true that the majority of the performance comes from VLIW and SIMD instruction packing. However, VLIW and SIMD are seriously hampered by LOAD instructions. Without some sort of optimization, the LOADs will be the limiting factor. You have to optimize away as many LOAD instructions as you can, and then do the packing, in order to get the lowest cycle count. It was fun!
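A rough way to see why the LOADs become the limiting factor is a per-engine lower bound: no schedule, however well packed, can finish in fewer cycles than the busiest engine needs. The sketch below uses made-up slot limits and op counts purely for illustration; none of the numbers come from the actual simulator or from my solution.

```python
import math

# Hypothetical slots per cycle for each engine (assumed, not the real limits).
SLOT_LIMITS = {"valu": 6, "load": 2}


def cycle_lower_bound(op_counts):
    """Best case for a perfectly packed schedule: ceil(ops / slots) on the busiest engine."""
    return max(math.ceil(n / SLOT_LIMITS[engine]) for engine, n in op_counts.items())


# Made-up op counts for the whole program, before and after removing loads.
before = {"valu": 6000, "load": 4000}
after = {"valu": 6000, "load": 1000}

print(cycle_lower_bound(before))  # load-bound: 4000 loads / 2 slots
print(cycle_lower_bound(after))   # valu-bound: 6000 valu ops / 6 slots
```

In this toy example the vector work never changes, yet cutting loads halves the bound, because the load engine was the bottleneck. Only once the loads are out of the way does better packing of the remaining ops pay off.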